video upload by Acids Team - Ircam

Note that Artisan Electronics has a Neurorack module system (4:52 here); however, it does not appear to be AI-based. There is also this user-labeled Eurorack system with "brainwaves/EEG signals as source of modulation (the Neuro portion of it)", but again no AI, and soundmachines' BI1brainterface (also no AI). (Update: there were also the Hartmann Neuron and Jomox Neuronium, both based on neural networks.) As for AI applied to synthesis in general, you can find posts mentioning artificial intelligence here. I believe the earliest reference would be John Chowning in 1964: "Following military service in a Navy band and university studies at Wittenberg University, Chowning, aided by Max Mathews of Bell Telephone Laboratories and David Poole of Stanford, set up a computer music program using the computer system of Stanford University's Artificial Intelligence Laboratory in 1964."

Description for the above video:

"The Neurorack is the first ever AI-based real-time synthesizer, which comes in many formats and more specifically in the Eurorack format. The current prototype relies on the Jetson Nano. The goal of this project is to design the next generation of music instrument, providing a new tool for musician while enhancing the musician's creativity. It proposes a novel approach to think and compose music. We deeply think that AI can be used to achieve this quest. The Eurorack hardware and software have been developed by our team, with equal contributions from Ninon Devis, Philippe Esling and Martin Vert."

https://github.com/ninon-io/Impact-Synth-Hardware/

http://acids.ircam.fr/neurorack/

More information

Motivations

Deep learning models have provided extremely successful methods in most application fields by enabling unprecedented accuracy in various tasks, including audio generation. However, the consistently overlooked downside of deep models is their massive complexity and tremendous computation cost. In the context of music creation and composition, model reduction becomes eminently important to provide these systems to users in real-time settings and on dedicated lightweight embedded hardware, which is particularly pervasive in the audio generation domain. Hence, in order to design a stand-alone, real-time instrument, we first need to craft a model that is extremely lightweight in terms of computation and memory footprint. To make this task easier, we relied on the Nvidia Jetson Nano, a small single-board computer with a 128-core GPU (graphics processing unit) and a quad-core CPU. The compression problem is the core of the PhD topic of Ninon Devis and a full description can be found here.

Targets of our instrument

We designed our instrument to meet several criteria that we consider crucial:

Musical: the generative model we chose is particularly interesting as it produces sounds that are impossible to synthesize without using samples.

Controllable: the interface was chosen to be handy and easy to manipulate.

Real-time: the hardware behaves like a traditional instrument and is just as reactive.

Stand alone: it is playable without any computer.

Model Description

We set our sights on the generation of impacts, as they are very complex sounds to reproduce and almost impossible to tweak. Our model can generate a large variety of impacts and makes it possible to play, craft and merge them. The sound is generated from the distribution of 7 descriptors that can be adjusted (Loudness - Percussivity - Noisiness - Tone-like - Richness - Brightness - Pitch).

Interface

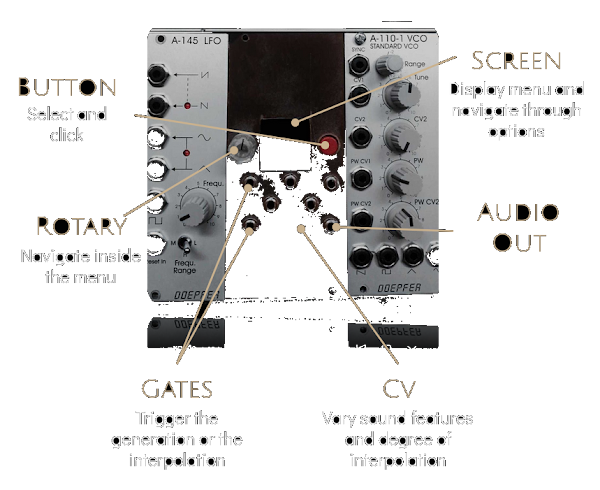

One of the biggest advantages of our module is that it can interact with other synthesizers. Following the classical conventions of modular synthesizers, our instrument can be controlled using CVs (control voltages) or gates. The main gate triggers the generation of the chosen impact. It is then possible to modify the amount of Richness and Noisiness with two of the CVs. A second impact can be chosen to be "merged" with the main impact: we will call this operation the interpolation between two impacts. Their descriptor amounts are blended to give a hybrid impact. The "degree of merging" is controlled by the third CV, whereas the second gate triggers the interpolation.
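As a rough illustration of the merging described above, here is a minimal Python sketch that blends the 7 descriptors of two impacts according to a CV level. It is not taken from the Neurorack code: the `Descriptors` class, the `cv_to_amount` and `interpolate` helpers, the 0-5 V CV range and the choice of a plain linear blend are all assumptions made for the example, and the actual module may interpolate differently.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Descriptors:
    """The 7 adjustable descriptors of one impact (hypothetical container)."""
    loudness: float
    percussivity: float
    noisiness: float
    tone_like: float
    richness: float
    brightness: float
    pitch: float


def cv_to_amount(cv_volts: float, v_min: float = 0.0, v_max: float = 5.0) -> float:
    """Map a control voltage to a merge amount in [0, 1].

    The 0-5 V range is an assumption; out-of-range voltages are clamped.
    """
    t = (cv_volts - v_min) / (v_max - v_min)
    return min(1.0, max(0.0, t))


def interpolate(main: Descriptors, second: Descriptors, amount: float) -> Descriptors:
    """Linearly blend each descriptor: amount=0 gives `main`, amount=1 gives `second`."""
    return Descriptors(**{
        f.name: (1.0 - amount) * getattr(main, f.name) + amount * getattr(second, f.name)
        for f in fields(Descriptors)
    })


# Example: the third CV sits at mid-range, so the hybrid is halfway between both impacts.
main_impact = Descriptors(0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0)
second_impact = Descriptors(1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0)
hybrid = interpolate(main_impact, second_impact, cv_to_amount(2.5))
```

In this sketch the second gate would simply decide when `interpolate` is called, while the third CV sets `amount`.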

Perhaps the intelligence of the musician feeding brainwaves into the module is artificial.

I have an OCZ nia headset that allows seven channels of mental control over any computer.
